In just a few years, the AI world has gone from model demos and viral chatbots to something far more brutal: a full-on infrastructure arms race. Every major tech giant is pouring unprecedented sums into chips, data centers, and power just to stay in the game. Over the last 48 hours alone, Nvidia smashed through the $5 trillion mark, Meta quietly locked in a huge chip deal with Amazon, and Oracle secured $16 billion to build an AI mega-campus in Michigan for OpenAI. The “Agentic Era” isn’t a buzzword anymore; it’s a multi-trillion-dollar buildout happening in real time.

1. The $5 Trillion King: Nvidia Turns Chips into a Story About Power

Nvidia has officially become the first company in history to cross the $5 trillion market capitalization milestone, briefly topping $5.1 trillion and becoming the most valuable listed company on the planet. Its stock jumped more than 4% on the day of the breakout, capping a run in which the company’s value has risen more than 14x since the end of 2022, fueled almost entirely by demand for AI compute. For context, Nvidia is now worth more than Apple, Alphabet, or Amazon, putting it at the top of the market cap leaderboards.

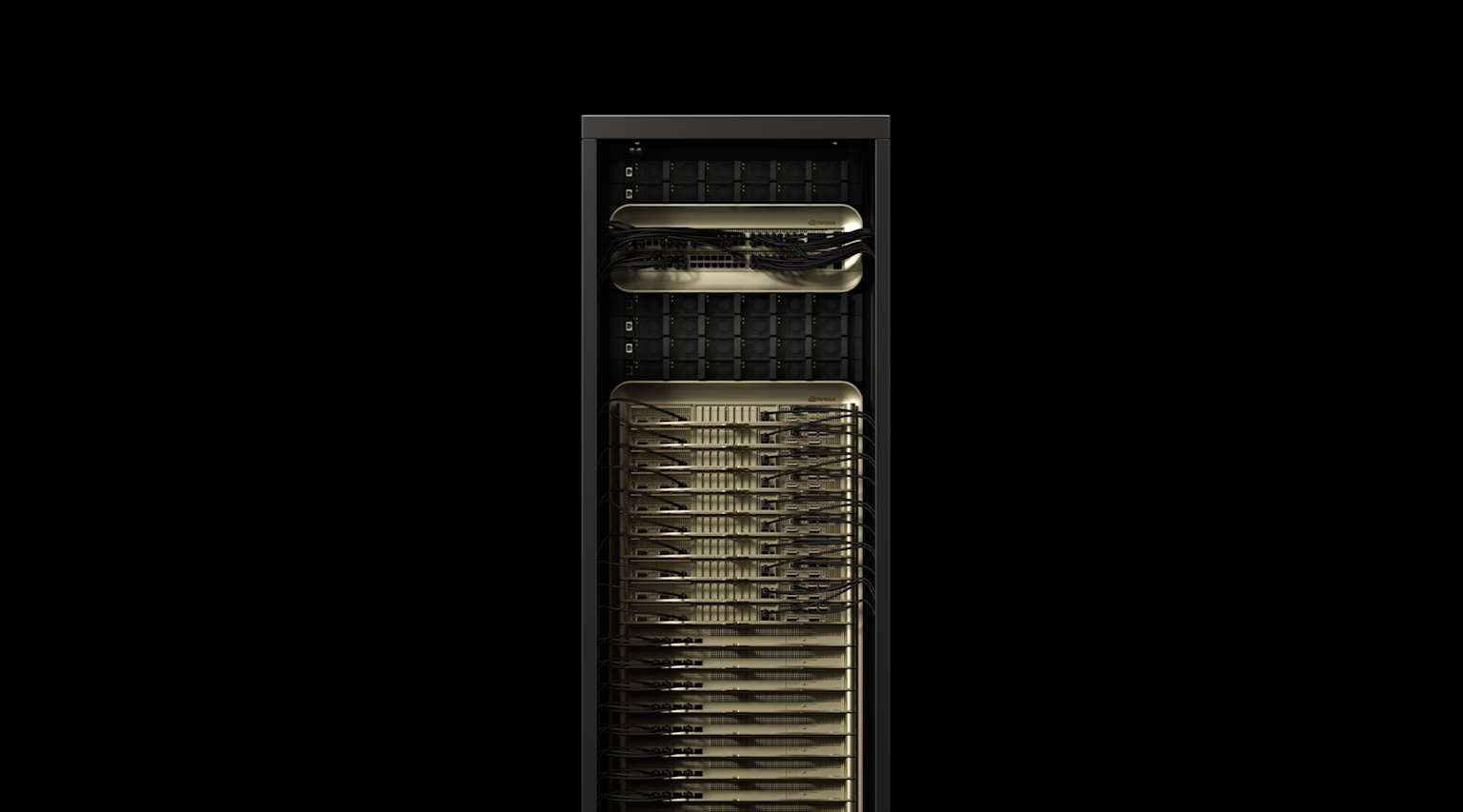

Behind the chart is a simple story: if you want to train cutting-edge models in 2026, you are probably using Nvidia’s H100, H200, or the new Blackwell chips—and you are probably struggling to get enough of them. Cloud giants like Microsoft, Amazon, and Google are still hoarding top-tier GPUs for their own platforms and for elite partners like OpenAI and Anthropic, leaving everyone else to fight over whatever capacity remains. Startups, meanwhile, are discovering that having a good idea is not enough; you also need a reservation on someone’s GPU cluster.

“We’re no longer in a software race; we’re in a physical infrastructure arms race where every rack, every megawatt, and every GPU allocation decides who gets to build the future.”

That’s why Nvidia’s valuation matters. It’s a reflection of a deeper reality: every major tech company now markets itself as “AI-first,” but only a few can actually secure the hardware needed to live up to that slogan. The result is a stratified ecosystem where hyperscalers move ahead with frontier models, while smaller players are pushed to optimize ruthlessly—training smaller, smarter models, compressing weights, and exploring alternative hardware just to survive.
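To make “compressing weights” concrete, here is a back-of-the-envelope sketch of how numeric precision drives the memory needed just to store a model’s weights. The 70-billion-parameter model size and the list of precisions are illustrative assumptions, and the estimate deliberately ignores activations, KV cache, and runtime overhead.

```python
# Back-of-the-envelope: memory needed to store model weights at different precisions.
# Illustrative only: ignores activations, KV cache, optimizer state, and framework overhead.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    for precision in BYTES_PER_PARAM:
        print(f"{precision:>10}: {weight_memory_gb(params, precision):6.1f} GB")
```

Under these assumptions, dropping from 16-bit to 4-bit weights cuts storage from 140 GB to 35 GB, the difference between spreading a model across multiple high-memory accelerators and fitting it on fewer, more widely available cards. Real quantized deployments also trade away some accuracy, which this arithmetic does not capture.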

2. Meta × Amazon: A Quiet but Massive Bet on Custom Silicon

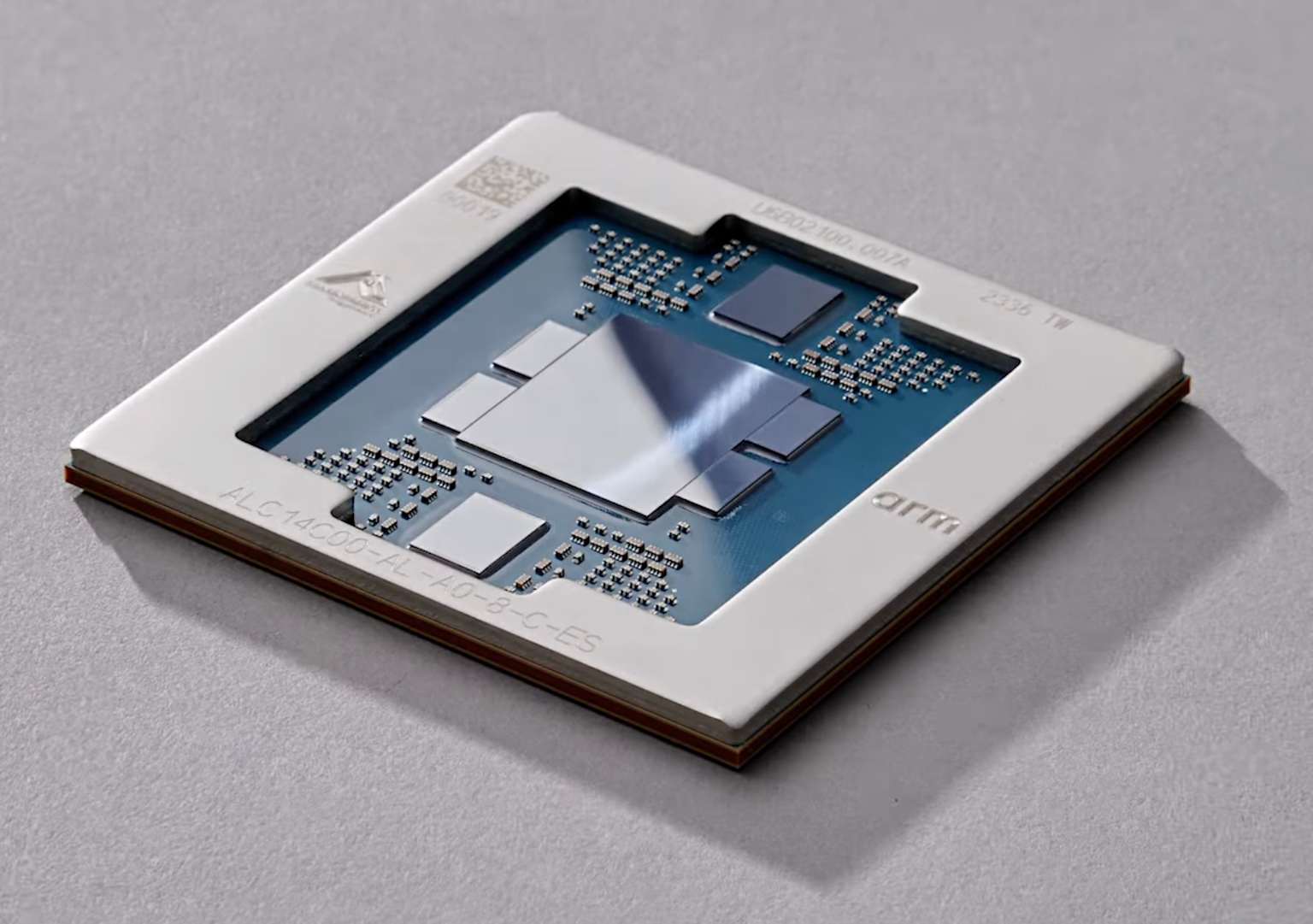

While Nvidia dominates the training stack, the inference side of AI (actually answering billions of user queries every day) is becoming a second battlefield. To reduce its reliance on Nvidia, Meta has signed a multiyear, multibillion-dollar deal with Amazon Web Services to use its Graviton chips at scale. The agreement gives Meta access to tens of millions of Graviton5 CPU cores over several years and is explicitly aimed at supporting Meta’s rapidly growing AI workloads.

Graviton chips are custom Arm-based processors designed by Amazon to deliver better price–performance for cloud workloads, especially high-volume inference and general compute tasks. By offloading a significant portion of inference traffic to Graviton, Meta can reserve its precious Nvidia H100s and future Blackwell GPUs for training the next generations of Llama models and ad-ranking systems. In other words, Meta is splitting its AI stack: custom silicon for day-to-day answers, premium GPUs for frontier research.

Key Takeaways from the Meta–Amazon Deal

- Inference at scale: Graviton5 offers strong price–performance for large-scale inference, helping Meta serve AI features to billions of users without blowing up its cloud bill.

- Supply chain diversification: Meta reduces its dependency on Nvidia’s GPU supply, which remains constrained and expensive, while it continues to develop its own MTIA accelerator chips internally.

- Cloud competition: For Amazon, landing Meta as a Graviton customer is a huge signal—AWS is not just selling storage and compute anymore; it’s becoming a serious alternative AI silicon platform alongside Nvidia and Google’s TPUs.

3. OpenAI’s $16B Michigan Mega-Campus: Where the Next Models Will Live

On the other side of the stack, OpenAI is scaling up in a different way: by locking in entire data centers. A project in Saline Township, Michigan, led by Related Digital and Oracle, has secured around $16 billion in financing for a new Oracle data center campus, built specifically to power AI applications—including workloads from OpenAI. The campus, nicknamed “The Barn,” will consist of multiple single-story data center buildings with more than a gigawatt of capacity, closed-loop cooling, and planned LEED certification.

Oracle executives describe the site as a cornerstone of America’s next-generation AI infrastructure, combining large-scale compute with an emphasis on local job creation and long-term regional investment. For OpenAI, this type of dedicated capacity is essential if it wants to support not only ChatGPT and API demand today, but also future models like a rumored GPT‑5.5 or real-time multimodal systems with persistent memory and “live” world understanding. The Michigan build is part of a broader wave: across the U.S., hyperscalers are committing hundreds of billions to data centers, often running into power grid bottlenecks and community pushback along the way.

ChatGPT Images 2.0: “Images with Thinking”

At the same time, OpenAI has rolled out ChatGPT Images 2.0, powered by the gpt-image-2 model, which introduces what the company calls “thinking capabilities” for image generation. Instead of instantly spitting out a picture, the model can enter a “thinking mode” where it breaks the prompt down into steps, plans composition and layout, and iterates before returning the final images. This upgrade dramatically improves spatial reasoning, text rendering, and fine-grained details like hands, dense scenes, or UI mockups.

Under the hood, this means significantly more compute per request. In thinking mode, gpt-image-2 can generate and critique multiple candidates, maintain multi-image consistency, and support higher resolutions up to 2K. That kind of workload doesn’t just need clever algorithms—it needs massive, always-on infrastructure, which circles back to why projects like the Michigan campus are being financed at tens of billions of dollars.
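A toy cost model helps show why “thinking” multiplies infrastructure needs. The sketch below is not OpenAI’s published method or pricing; it simply assumes that generation cost scales linearly with pixel count, that each critique pass over a candidate costs a hypothetical 10% of one generation, and that “2K” means a 2048×2048 output.

```python
# Toy estimate of how a plan-generate-critique loop multiplies per-request compute.
# All cost assumptions are hypothetical, not OpenAI's published figures.


def relative_cost(candidates: int, critique_rounds: int, width: int, height: int,
                  base_width: int = 1024, base_height: int = 1024,
                  critique_fraction: float = 0.1) -> float:
    """Cost relative to a single one-shot image at the base resolution.

    Assumes generation cost scales linearly with pixel count and that each
    critique pass over one candidate costs `critique_fraction` of a generation.
    """
    pixel_ratio = (width * height) / (base_width * base_height)
    generation = candidates * pixel_ratio
    critique = candidates * critique_rounds * critique_fraction * pixel_ratio
    return generation + critique


if __name__ == "__main__":
    one_shot = relative_cost(candidates=1, critique_rounds=0, width=1024, height=1024)
    thinking = relative_cost(candidates=4, critique_rounds=2, width=2048, height=2048)
    print(f"one-shot: {one_shot:.1f}x, thinking mode: {thinking:.1f}x")
```

With four candidates, two critique rounds, and a 2048×2048 output, this model lands at about 19 times the compute of a single 1024×1024 image. The exact multiplier depends entirely on the assumed parameters, but the shape of the result is the point: per-request cost grows with every extra candidate, critique pass, and pixel.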

| Company | Recent Strategic Move | Estimated Investment / Value |
|---|---|---|
| Nvidia | Crossed $5T market cap as AI GPU demand surged. | ≈ $5.05 trillion market capitalization. |
| OpenAI + Oracle | Financing and construction of a Michigan AI mega–data center campus. | $16 billion in project financing. |
| Meta | Multiyear deal to use AWS Graviton chips for AI workloads. | “Multibillion-dollar” multi-year agreement. |
| Hyperscalers (Meta, Amazon, Microsoft, Alphabet, Oracle) | Planned AI infrastructure and data center capex through 2026. | ≈ $720 billion combined AI capex by 2026. |

4. The $720B Question: Is This Sustainable?

Zooming out, analysts now estimate that five major hyperscalers—Meta, Amazon, Microsoft, Alphabet, and Oracle—are on track to spend roughly $720 billion on AI infrastructure and related capex by the end of 2026. That figure covers GPUs and custom chips, new and expanded data centers, networking, and the power systems needed to keep everything running 24/7. It’s an enormous, front-loaded bet that assumes AI revenue and monetization will catch up later.

The risk, sometimes called the “AI capex trap,” is that the cost of building this compute empire runs ahead of the near-term revenue from AI products. While usage is exploding, many AI features are still bundled into existing products (like search, ads, or cloud contracts) rather than sold as clearly priced add-ons. If monetization lags too far behind, some companies could find themselves owning incredibly advanced infrastructure that is underutilized—or priced too cheaply to justify its cost on the balance sheet.
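One way to see the trap is to ask how much yearly revenue a capex program needs just to cover its depreciation. The sketch below treats the ~$720B estimate as a single pool depreciated on a straight-line basis; the useful lives and the 50% contribution margin (the share of each AI revenue dollar left after operating costs) are hypothetical inputs, not figures from the sources.

```python
# Toy "AI capex trap" arithmetic: how much yearly revenue does a capex program need
# just to cover its depreciation? Useful lives and margin are hypothetical inputs.


def annual_depreciation(capex_billions: float, useful_life_years: float) -> float:
    """Straight-line depreciation per year, in billions of dollars."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return capex_billions / useful_life_years


def breakeven_revenue(capex_billions: float, useful_life_years: float,
                      contribution_margin: float) -> float:
    """Yearly revenue whose contribution (revenue x margin) equals the depreciation charge."""
    if not 0 < contribution_margin <= 1:
        raise ValueError("contribution margin must be in (0, 1]")
    return annual_depreciation(capex_billions, useful_life_years) / contribution_margin


if __name__ == "__main__":
    capex = 720.0  # the ~$720B combined estimate, treated as one pool (assumption)
    margin = 0.5   # hypothetical contribution margin on AI revenue
    for life in (3, 4, 5, 6):
        dep = annual_depreciation(capex, life)
        rev = breakeven_revenue(capex, life, margin)
        print(f"{life}-year life: ${dep:.0f}B/yr depreciation, "
              f"~${rev:.0f}B/yr revenue to break even")
```

The assumed hardware life matters as much as the headline number: halving it from six years to three doubles the yearly depreciation charge from $120B to $240B, and with it the revenue bar that AI products have to clear.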

On the other hand, if AI truly becomes as foundational as mobile or the internet itself, this period might look, in hindsight, like the early days of laying fiber for the web. The difference is scale and speed: we’ve never seen hundreds of billions of dollars pointed at one technology wave in such a short window. For now, the market is still rewarding aggression, but the bill is likely to come due over the next two to three years.

Frequently Asked Questions

What is “images with thinking” in ChatGPT Images 2.0?

Launched in April 2026, ChatGPT Images 2.0 (gpt-image-2) introduces a “thinking mode” where the model plans before it draws. Instead of generating an image in one shot, it decomposes the prompt, reasons about object placement, composition, and text, then iterates internally before returning the final outputs. This leads to fewer errors with hands, text, and complex layouts, but it also increases the compute required per image compared to earlier, simpler models.

Why is Meta using Amazon chips instead of relying only on its own hardware?

Meta is indeed developing its own MTIA accelerator chips, but those designs take years to mature and scale globally. In the meantime, Meta’s AI usage is exploding across products like Reels, feeds, Llama-based assistants, and ad ranking, so it needs immediate, reliable capacity. Renting AWS’s Graviton5 chips through a multibillion-dollar, multiyear deal gives Meta a cost-effective way to handle inference at scale today, while its in-house hardware roadmap continues in parallel.

Is there really an AI chip and compute shortage in 2026?

Yes. Despite record production, the highest-end AI GPUs and accelerators remain heavily supply-constrained. Hyperscalers like Microsoft, Amazon, Google, and Meta are reserving vast amounts of capacity to support their own models and a small set of top-tier partners such as OpenAI and Anthropic. That leaves many startups and smaller enterprises in what’s often called a “compute crunch,” forcing them either to queue up for capacity, pay premium prices, or redesign their models to run efficiently on more available, lower-tier hardware.

References

- CNBC – Nvidia stock closes at record, pushing market cap past $5 trillion

- Economic Times – Nvidia becomes first company to cross $5 trillion market cap

- CompaniesMarketCap – Nvidia (NVDA) market capitalization data

- Bloomberg – Oracle data center nears $16 billion financing for Michigan campus

- Blackstone / Related Digital – Financing announcement for $16B Oracle data center project

- MacRumors – OpenAI launches ChatGPT Images 2.0 with thinking capabilities

- BuildFastWithAI – Developer breakdown of ChatGPT Images 2.0 (gpt-image-2)

- AIAutomationGlobal – ChatGPT Images 2.0: native reasoning and thinking mode

- TechEconomy – Meta signs multibillion-dollar chip deal with Amazon (Graviton5)

- MEXC – Meta signs multibillion-dollar deal to use Amazon chips for AI

- AInvest – AI Capex Flow: $720B to chips, grid bottlenecks, and stock reactions

- TechMacroArchive – Big Tech AI Capex ROI: the $720B gamble

- Yahoo Finance – Google takes on Nvidia with new AI chips