Hey there, fellow tech enthusiasts! If you blinked over the past few days, you might have missed one of the most exciting accelerations in the AI race yet. Just this week in April 2026, OpenAI dropped GPT-5.5, pushing the boundaries toward truly autonomous "agentic" AI, while Google responded with its April "Gemini Drop" — a sweeping set of multimodal upgrades that make everyday creativity and productivity feel almost magical.

These aren't just incremental tweaks. We're witnessing a fundamental shift: from AI that answers questions to AI that can plan, execute, and collaborate like a skilled teammate. Whether you're a developer debugging complex code or a creator turning personal memories into art, these tools are reshaping how we work and create. Let's dive deep into what happened, why it matters, and what it could mean for all of us.

1. OpenAI's GPT-5.5: From Chatbot to Autonomous Collaborator

On April 23, 2026, OpenAI officially released GPT-5.5, describing it as their "smartest and most intuitive" model yet.

The model powers **Codex**, OpenAI's agentic coding application, which is already transforming developer workflows. Early internal deployment at NVIDIA gave over 10,000 employees access, and the results have been impressive: debugging cycles that once took days are now wrapping up in hours.

Running on NVIDIA’s powerful **GB200 NVL72** rack-scale infrastructure, GPT-5.5 delivers dramatic performance gains: up to **50x higher token output per second** and **35x lower inference costs** compared to previous generations. This hardware synergy makes large-scale, sustained agentic work economically viable for enterprises.

"GPT-5.5 delivers the sustained performance required for execution-heavy work... It’s more than faster coding—it’s a new way of working that helps people operate at a fundamentally different speed." — Justin Boitano, VP of Enterprise AI at NVIDIA.

Key Highlights of GPT-5.5

- Stronger Agentic Capabilities: Better at long-horizon reasoning, tool use, and persisting through complex, multi-step problems like research or full-feature development from natural language prompts.

- Enterprise-Ready Efficiency: Co-designed with NVIDIA hardware for massive speed and cost improvements, enabling real-world deployment at scale.

- Enhanced Safeguards: Rolled out with stronger safety measures, including a new privacy-focused approach for sensitive data handling.

- Availability: Immediately available to ChatGPT Plus, Pro, Business, and Enterprise users (with GPT-5.5 Pro for the most demanding tasks). API access followed shortly after with additional safeguards.

2. Google’s April 2026 Gemini Drop: Creativity, Personalization, and Multimodal Magic

Google didn't sit idle. In their April 2026 "Gemini Drop," they rolled out a rich suite of updates focused on making Gemini more personal, creative, and useful across devices.

The standout is **Nano Banana 2**, an advanced image generation model that taps into your Google Photos and "Personal Intelligence" to create images that truly reflect *your* life, style, and memories. It's no longer generic AI art — it's personalized visuals drawn from your own context.

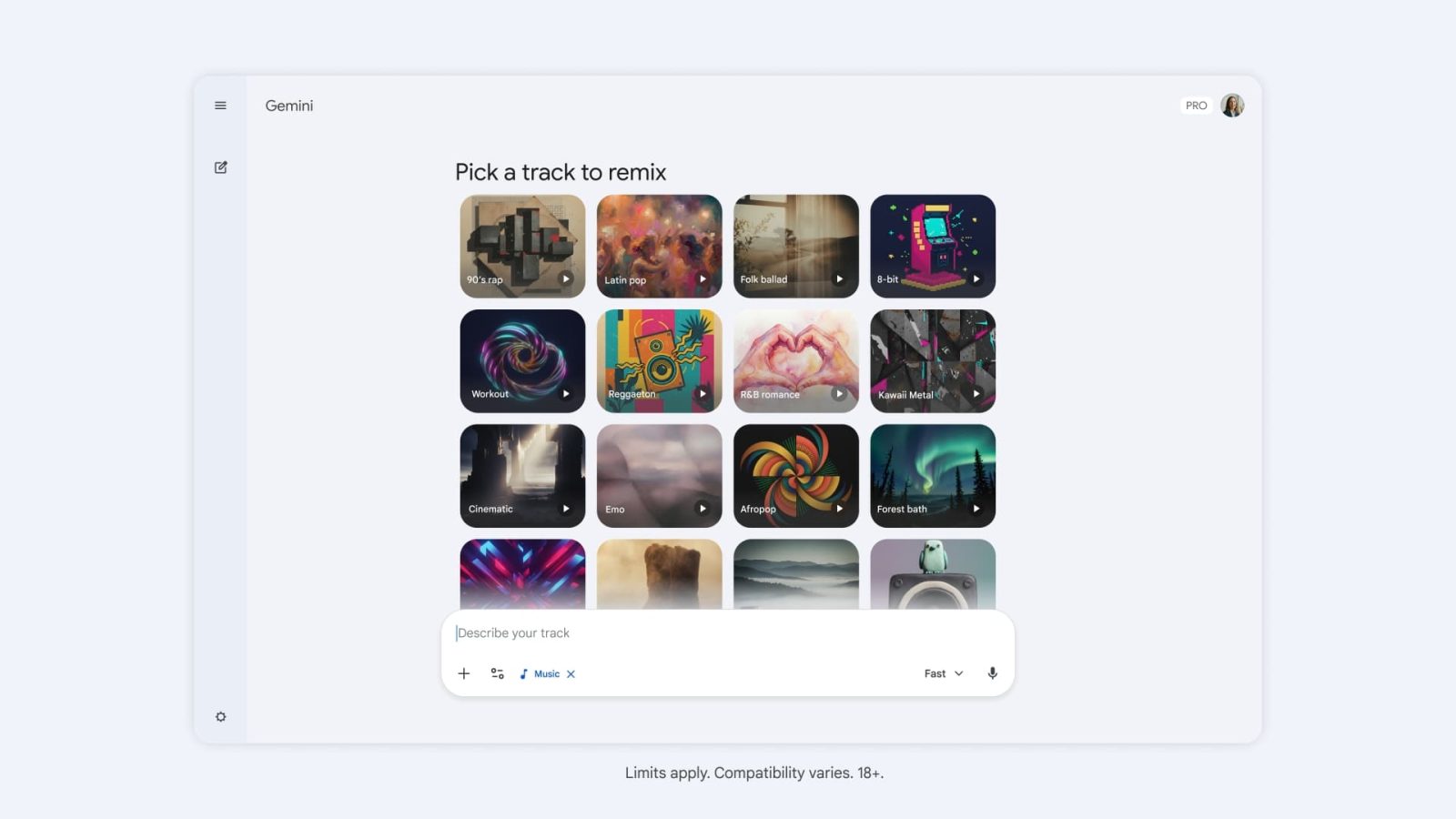

On the audio side, **Lyria 3 Pro** now lets users create high-fidelity music tracks up to **3 minutes long** for free, with advanced mixing and customization options. This opens exciting doors for hobbyists and creators alike.

Additional highlights include:

- 3D model generation and interactive charts directly in the Gemini app — perfect for researchers, educators, and designers.

- Expanded "Help me write" in Google Docs with "Match Writing Style" support.

- Official Gemini app launch for Mac.

- Deeper integration of NotebookLM for project organization.

| Feature | What's New in April 2026 |
|---|---|
| Image Generation | Nano Banana 2 + Personal Intelligence using your Google Photos for hyper-personalized results. |
| Music Creation | Lyria 3 Pro: free tracks up to 3 minutes with advanced mixing. |
| Productivity | "Match Writing Style" in Docs; NotebookLM integration; 3D models & interactive charts. |
| Platforms | Official Gemini Mac app; broader Personal Intelligence rollout. |

3. Apple’s AI Evolution Under New Leadership: Hardware Meets Intelligence

In a move that signals Apple's serious push into the AI era, the company announced that **John Ternus**, current Senior VP of Hardware Engineering, will become CEO effective September 1, 2026, with Tim Cook transitioning to Executive Chairman.

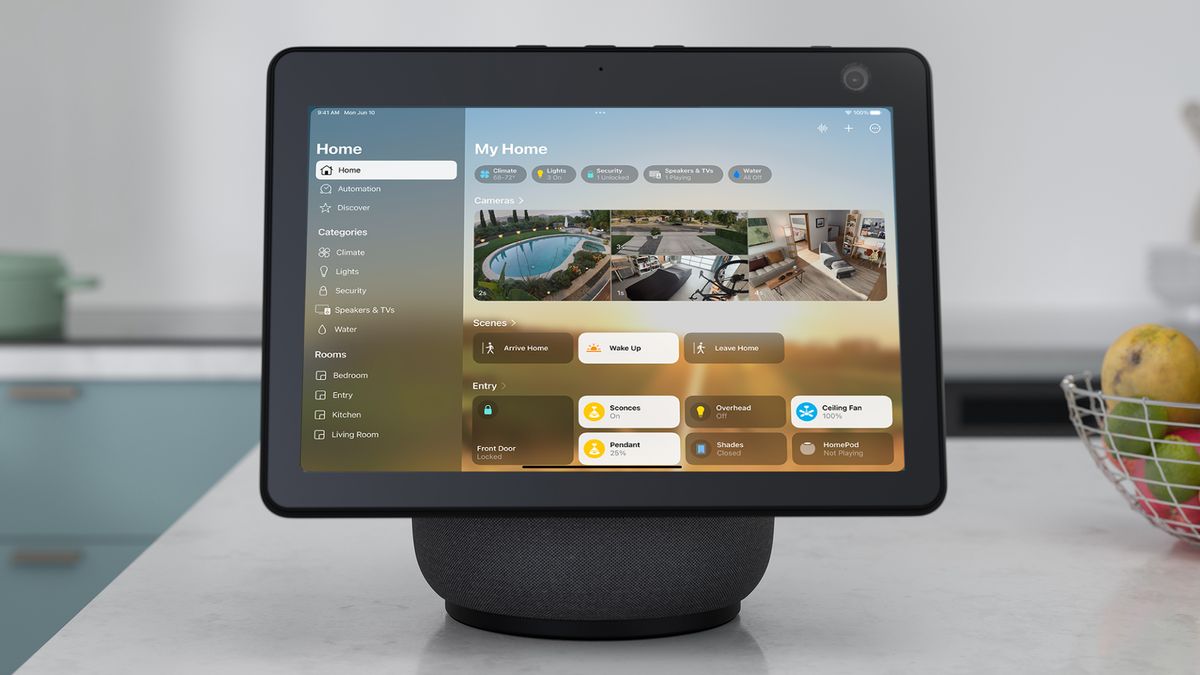

Under Ternus, Apple is reportedly accelerating "Apple Intelligence" with new hardware categories. Rumors point to a modular **Apple Home Hub** — possibly a tablet-like device with advanced robotics (including concepts featuring a robotic arm) — that leverages powerful foundation models for context-aware home assistance. There's also talk of deeper integration with partners like Google for complex reasoning in the upcoming Siri overhaul on iOS 27, while keeping core privacy features on-device.

Frequently Asked Questions

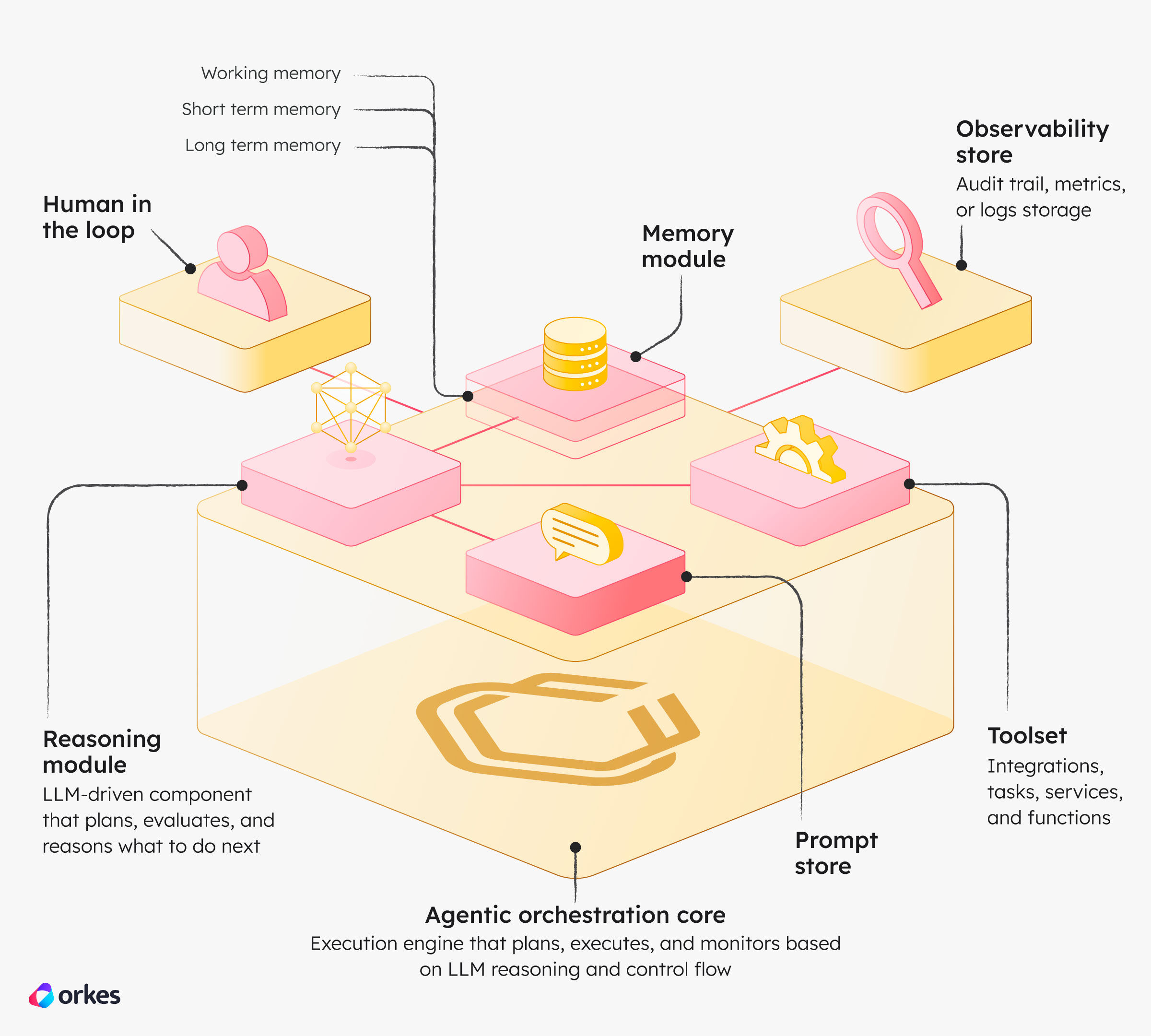

What exactly makes an AI "agentic" like GPT-5.5?

Agentic AI goes beyond answering questions. It can break down complex goals into steps, use external tools (code execution, web search, etc.), reflect on results, and iterate autonomously — acting more like a proactive collaborator than a simple assistant.
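If you'd like to see that loop in miniature, here's a toy sketch in Python. To be clear, this is not how GPT-5.5 or Codex works internally, and it doesn't call any real API: `search_price`, `add_numbers`, `plan`, and `reflect` are all made-up stand-ins, and the "planning" is hard-coded where a real agent would ask a model. It only illustrates the plan, act, reflect, and retry pattern.

```python
from typing import List, Optional, Tuple

Step = Tuple[str, Optional[str]]


# Hypothetical tools standing in for web search, code execution, and so on.
def search_price(item: str) -> float:
    prices = {"widget": 4.0, "gadget": 6.0}
    return prices[item]  # raises KeyError for names it does not recognize


def add_numbers(values: List[float]) -> float:
    return sum(values)


def plan(items: List[str]) -> List[Step]:
    """Break the goal into ordered steps. A real agent would ask a model to do this."""
    steps: List[Step] = [("search_price", item) for item in items]
    steps.append(("add_numbers", None))
    return steps


def reflect(arg: str) -> str:
    """Look at a failed step and propose a revised input (here: tidy the name)."""
    return arg.strip().lower()


def run_agent(items: List[str], max_attempts: int = 2) -> Tuple[float, List[str]]:
    found: List[float] = []  # intermediate results the agent remembers
    log: List[str] = []      # trace of what the agent did
    for tool, arg in plan(items):
        if tool == "add_numbers":
            total = add_numbers(found)
            log.append(f"add_numbers -> {total}")
            return total, log
        for _ in range(max_attempts):
            try:
                price = search_price(arg)
                found.append(price)
                log.append(f"search_price({arg!r}) -> {price}")
                break
            except KeyError:
                # Reflect on the failure, revise the input, and try again.
                log.append(f"search_price({arg!r}) failed, revising")
                arg = reflect(arg)
        else:
            raise RuntimeError(f"gave up on {arg!r}")
    raise RuntimeError("plan had no final step")


total, log = run_agent(["widget", " Gadget "])
print(total)
for line in log:
    print(line)
```

The interesting part is the second item: the first lookup fails on the messy name, the agent revises its input, and the retry succeeds without anyone stepping in. Real agents do the same thing with far richer tools and a model making the decisions.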

When can I try the new Gemini features?

Most April 2026 Gemini Drop features, including Nano Banana 2 and Lyria 3 Pro, are rolling out now to eligible users globally. Some writing-style features may start in English with more languages coming soon.

How is Apple incorporating external AI like Google's models?

Reports suggest Apple is partnering for advanced reasoning capabilities in Siri while maintaining strong on-device processing for privacy-sensitive tasks. This hybrid approach would let Apple move faster on AI features without building everything from scratch.

What do you think — are we finally at the tipping point where AI agents become everyday tools? Drop your thoughts below. Stay curious, and keep building!