Introduction

Google DeepMind has officially unveiled Gemma 4, its latest family of open-source AI models. Built on the research and technology behind Gemini 3, Gemma 4 is engineered for high intelligence-per-parameter and for a diverse range of applications. The release gives developers and researchers new tools for AI work, particularly in advanced reasoning and agentic workflows.

What is Gemma 4?

Gemma 4 represents Google DeepMind's commitment to fostering an open and collaborative AI ecosystem. Released around April 2-3, 2026, this new iteration of the Gemma family ships under an Apache 2.0 license, a move that significantly enhances its accessibility and utility for developers. Compared with the licensing of previous versions, the more permissive terms encourage broader adoption and integration into various projects, from academic research to commercial applications. At its core, Gemma 4 is designed to be a capable, efficient, and versatile AI model, built to handle complex tasks.

Key Features and Capabilities

Gemma 4 stands out with a suite of impressive features that underscore its position as a frontier model in the open AI space. Its multimodal architecture is a cornerstone, allowing it to natively process and understand not just text, but also images and, in its smaller variants (E2B and E4B), audio. This comprehensive input capability enables Gemma 4 to interact with and interpret the world in a more holistic manner, paving the way for more sophisticated and context-aware AI applications.

One of the most remarkable advancements in Gemma 4 is its extended context window, which can accommodate up to 256,000 tokens in its larger models and 128,000 in its smaller counterparts. This expansive context window is crucial for handling long-form content, complex conversations, and intricate data analysis, allowing the model to maintain coherence and draw insights from vast amounts of information. Furthermore, Gemma 4 boasts multilingual support for over 140 languages, ensuring improved localized experiences and global applicability.
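To make the context limits above concrete, here is a minimal sketch of a budget check for long inputs. The 256,000 and 128,000 figures come from the article; the helper names, the size-class labels, and the "about 4 characters per token" estimate are assumptions for illustration only. A real application would count tokens with the model's own tokenizer.

```python
# Context limits quoted in the article; the keys are illustrative labels.
CONTEXT_LIMITS = {"large": 256_000, "small": 128_000}

# Assumption: roughly 4 characters per token. Real counts depend on the tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for `text` (never less than 1)."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def fits_in_context(prompt: str, size_class: str, reserve_for_output: int = 4_096) -> bool:
    """True if the prompt plus the tokens reserved for the reply fit in the window."""
    budget = CONTEXT_LIMITS[size_class] - reserve_for_output
    return estimate_tokens(prompt) <= budget


def split_to_fit(text: str, size_class: str, reserve_for_output: int = 4_096) -> list[str]:
    """Cut `text` into consecutive pieces that each stay within the estimated budget."""
    budget_chars = (CONTEXT_LIMITS[size_class] - reserve_for_output) * CHARS_PER_TOKEN
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]
```

With these assumptions, a 600,000-character document is estimated at 150,000 tokens, so it would fit the larger window in one pass but would need to be split for the smaller one.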

Beyond its input modalities and context handling, Gemma 4 is specifically engineered for advanced reasoning and agentic workflows. This means it excels at tasks requiring logical deduction, problem-solving, and the ability to plan and execute multi-step processes. The inclusion of a built-in "thinking mode" and function calling further enhances its utility, enabling developers to create more autonomous and intelligent agents.
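The application side of function calling can be sketched in a few lines. The article does not describe the format Gemma 4 uses for tool calls, so the JSON shape below (`name` plus `arguments`), the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical. The point is only the general pattern: the model proposes a call, the application runs it, and the result goes back to the model.

```python
import json


def get_weather(city: str) -> dict:
    """Stand-in tool; a real one would query a weather service."""
    return {"city": city, "forecast": "sunny"}


# Registry of tools the application is willing to run (hypothetical).
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(raw: str) -> str:
    """Parse a model-emitted call, run the matching tool, and return a JSON result string."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return json.dumps({"error": "tool call was not valid JSON"})
    if not isinstance(call, dict):
        return json.dumps({"error": "tool call must be a JSON object"})

    name = call.get("name")
    tool = TOOLS.get(name)
    if tool is None:
        return json.dumps({"error": f"unknown tool: {name}"})

    try:
        result = tool(**call.get("arguments", {}))
    except TypeError as exc:  # missing, extra, or malformed arguments
        return json.dumps({"error": f"bad arguments: {exc}"})
    return json.dumps({"name": name, "result": result})
```

Returning errors as JSON instead of raising lets an agent loop feed the failure back to the model so it can correct the call on the next step.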

Model Variants and Architecture

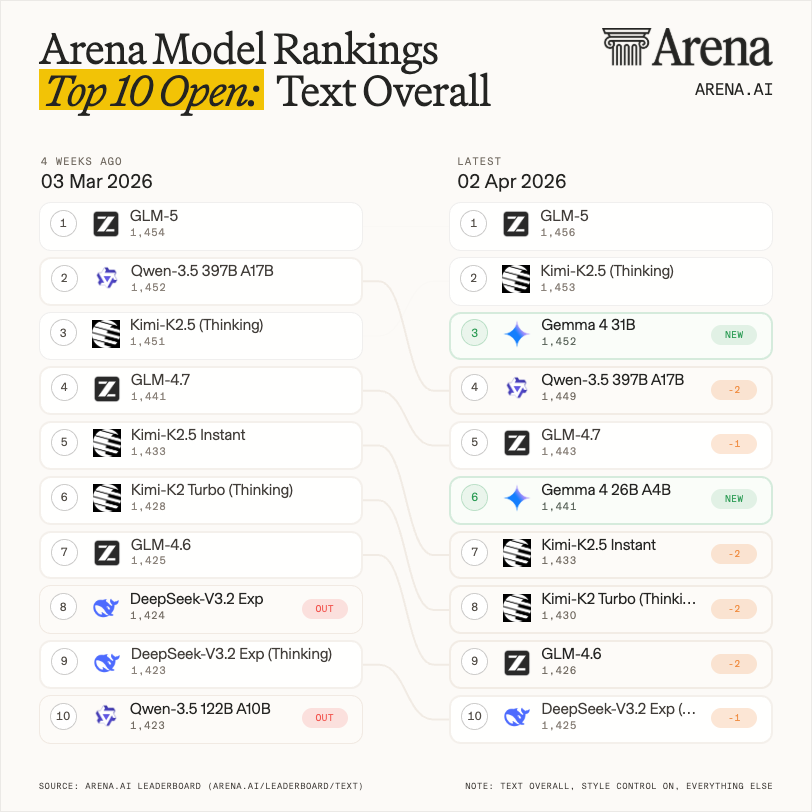

The Gemma 4 family comprises several variants, each optimized for different use cases and computational environments. The flagship model, Gemma 4 31B, is a dense model with a 256K context window. There is also a Gemma 4 26B-A4B variant, which likely uses a Mixture-of-Experts (MoE) architecture: the "A4B" designation appears to denote active parameters, suggesting that only a subset of the model's weights is used for each token in order to improve efficiency through sparse activation. The smaller E2B and E4B variants are particularly noteworthy for their ability to process audio alongside text and images, making them well suited to on-device and edge computing applications.
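Sparse activation in a Mixture-of-Experts layer can be illustrated with a toy top-k router. This is a generic sketch of the technique, not Gemma 4's actual routing: the expert functions, logits, and the choice of `k` are invented for the example, and reading "26B-A4B" as roughly 4B active out of 26B total parameters is itself an interpretation of the name.

```python
import math
from typing import Callable, List, Tuple


def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x: float, experts: List[Callable[[float], float]],
                router_logits: List[float], k: int) -> float:
    """Run only the selected experts and mix their outputs by router weight."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(router_logits, k))


# Toy experts: each one just scales its input by a different factor.
experts = [lambda x, s=s: x * s for s in (1.0, 2.0, 3.0, 4.0)]
output = moe_forward(10.0, experts, router_logits=[0.0, 2.0, 1.0, 2.0], k=2)

# If the name is read literally, about 4 of 26 billion parameters are active per token.
active_fraction = 4 / 26
```

Only two of the four toy experts run for this input; the other two contribute no compute, which is the source of the efficiency gain that sparse activation aims for.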

Underpinning these capabilities is a sophisticated architecture. Gemma 4 employs a 5:1 hybrid attention mechanism that combines sliding window attention with global attention; the ratio suggests five sliding-window layers for every global-attention layer. This approach lets the model process local dependencies cheaply while still capturing global dependencies across the input sequence. The architecture also features a Pre- and Post-norm setup, in which normalization is applied both before and after each sublayer, a technique that aids stable training. A unified multimodal architecture handles the different input types within a single model.
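The difference between the two attention types is easiest to see in their masks. The sketch below assumes the 5:1 ratio means five sliding-window layers followed by one global layer, repeated through the stack; the article gives only the ratio, so the ordering, the function names, and the tiny window size are illustrative assumptions.

```python
from typing import List, Optional


def layer_pattern(num_layers: int, local_per_global: int = 5) -> List[str]:
    """Label each layer, repeating `local_per_global` local layers then one global layer."""
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local" for i in range(num_layers)]


def causal_mask(seq_len: int, window: Optional[int] = None) -> List[List[bool]]:
    """Build mask[q][k], True when query position q may attend to key position k.

    window=None gives full causal (global) attention. Otherwise each query sees
    only the most recent `window` positions, itself included.
    """
    return [
        [k <= q and (window is None or q - k < window) for k in range(seq_len)]
        for q in range(seq_len)
    ]


pattern = layer_pattern(12)
local_mask = causal_mask(4, window=2)
global_mask = causal_mask(4)

# Count the allowed query/key pairs as a proxy for attention cost.
local_pairs = sum(map(sum, local_mask))
global_pairs = sum(map(sum, global_mask))
```

The local mask grows linearly with sequence length for a fixed window, while the global mask grows quadratically, which is why limiting global attention to a fraction of the layers helps with long contexts.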

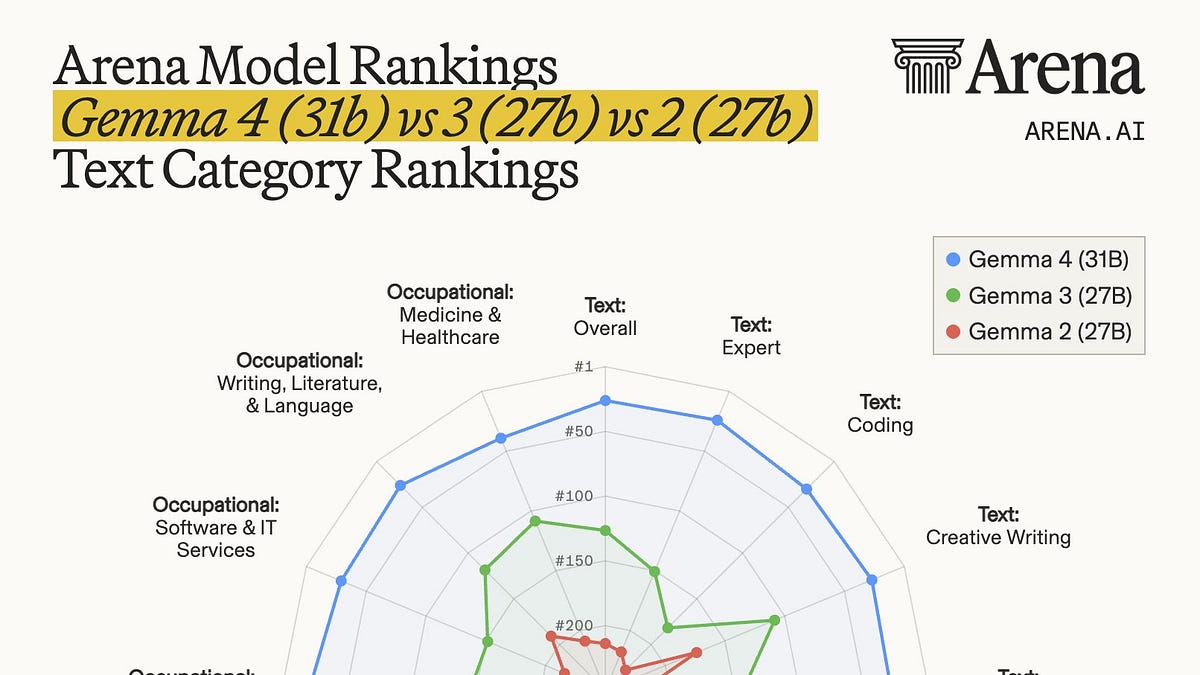

Performance and Benchmarks

Early benchmarks and community feedback highlight Gemma 4's impressive performance. In the Arena ranking, Gemma-4-31B secured the #3 position among open models and #27 overall, a testament to its capabilities against a broad spectrum of AI models. It has been noted for outperforming even larger models in various reasoning and multimodal tasks, demonstrating Google DeepMind's success in maximizing intelligence-per-parameter. The improved multilingual support also translates into better localized experiences, making Gemma 4 a powerful tool for global applications.

Videos on Gemma 4

For a deeper look at the capabilities and technical breakdowns of Gemma 4, see the community videos listed under References.

Availability and Ecosystem

Gemma 4 is readily accessible across a variety of platforms, ensuring broad adoption and ease of integration for developers. It is available on popular AI platforms such as Hugging Face, Ollama, and vLLM, providing flexible deployment options. For Android developers, Gemma 4 is integrated into AICore, facilitating on-device AI applications. Furthermore, it is accessible through Google AI for Developers, offering comprehensive documentation and tools for seamless development. The model's support for agents and various inference engines further solidifies its position as a versatile and developer-friendly open AI solution.
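As one example of local deployment, the sketch below sends a prompt to an Ollama server over its HTTP API using only the standard library. It assumes Ollama is running on its default local port and that the model has already been pulled; the `gemma4` tag is a placeholder, not a confirmed model name, so check the tags your installation actually lists.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # hypothetical tag; use whatever name your Ollama install reports


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

Calling `generate("Summarize the Apache 2.0 license in one sentence.")` would return the model's reply as a string once the server and model are available. Building the request separately from sending it keeps the payload easy to inspect without a network connection.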

The Impact of Gemma 4

The release of Gemma 4 is poised to have a profound impact on the AI landscape. Its open-source nature, coupled with the permissive Apache 2.0 license, is expected to accelerate innovation by making advanced AI capabilities available to a wider audience. Developers can now leverage a state-of-the-art multimodal model for a myriad of applications, from enhancing personal assistants and creating intelligent content generation tools to developing sophisticated agentic systems. The focus on efficiency and on-device capabilities also means that powerful AI can be deployed in environments with limited resources, democratizing access to advanced AI functionalities.

Frequently Asked Questions (FAQs)

What is the main difference between Gemma 4 and its predecessors?

Gemma 4 improves upon its predecessors with enhanced multimodal capabilities (text and images across the family, plus audio in the smaller E2B and E4B variants), a much larger context window (up to 256K tokens in the larger models), and a more permissive Apache 2.0 license that fosters broader adoption and innovation.

Can Gemma 4 be run on local devices?

Yes, Gemma 4 is designed with efficiency in mind, and its smaller variants (E2B and E4B) are particularly suited for on-device and edge computing applications, making it possible to run powerful AI locally.

What kind of applications can be built with Gemma 4?

Gemma 4's advanced reasoning, multimodal understanding, and agentic workflow support make it ideal for applications such as intelligent chatbots, content creation tools, data analysis, multilingual translation, and autonomous agents.

Is Gemma 4 truly open source?

Yes, Gemma 4 is released under the Apache 2.0 license, which is a highly permissive open-source license, allowing for free use, modification, and distribution.

Where can developers find resources to start working with Gemma 4?

Developers can find resources, documentation, and the models themselves on platforms like Hugging Face, Ollama, vLLM, AICore for Android, and the Google AI for Developers portal.

Conclusion

Gemma 4 is a significant step forward for open AI. By combining multimodal capabilities, an expansive context window, and a developer-friendly open-source license, Google DeepMind has delivered a tool that is well placed to inspire a new wave of innovation. As developers begin to build with Gemma 4, we can anticipate AI that is more capable, more accessible, and more easily integrated into everyday applications. The journey of open AI continues, and with Gemma 4, the path forward looks promising.

References

- Google DeepMind. (n.d.). Gemma 4. Retrieved from https://deepmind.google/models/gemma/gemma-4/

- Google AI for Developers. (n.d.). Gemma 4 model card. Retrieved from https://ai.google.dev/gemma/docs/core/model_card_4

- Google Blog. (n.d.). Gemma 4: Our most capable open models to date. Retrieved from https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/

- Hugging Face. (n.d.). Welcome Gemma 4: Frontier multimodal intelligence on device. Retrieved from https://huggingface.co/blog/gemma4

- Interconnects AI. (n.d.). Gemma 4 and what makes an open model succeed. Retrieved from https://www.interconnects.ai/p/gemma-4-and-what-makes-an-open-model

- The Verge. (n.d.). Google's new Gemma 4 'open' AI model sets developers free. Retrieved from https://www.theverge.com/ai-artificial-intelligence/906062/googles-gemma-4-open-ai-model

- Medium. (n.d.). Google Gemma 4 Explained: Why Google's Apache 2.0 Open Model Release Matters for Local AI. Retrieved from https://medium.com/data-and-beyond/google-gemma-4-explained-why-googles-apache-2-0-open-model-release-matters-for-local-ai-d5ba37b5b7eb

- NVIDIA Developer. (n.d.). Bringing AI Closer to the Edge and On-Device with Gemma 4. Retrieved from https://developer.nvidia.com/blog/bringing-ai-closer-to-the-edge-and-on-device-with-gemma-4/

- YouTube. (n.d.). Gemma 4 Has Landed! Retrieved from https://www.youtube.com/watch?v=5aqF1HVpjdc

- YouTube. (n.d.). Gemma 4 Is INCREDIBLE! Google's Open Model IS .... Retrieved from https://www.youtube.com/watch?v=KW5SFt3rgKo

- YouTube. (n.d.). Google just dropped Gemma 4... (WOAH). Retrieved from https://www.youtube.com/watch?v=BrJdGP21B5g

- YouTube. (n.d.). Google drops Gemma 4, Anthropic's SECRET AI Agent, Qwen 3.6 .... Retrieved from https://www.youtube.com/watch?v=slH-jPY1TgE

- YouTube. (n.d.). You Should Run the Gemma 4 AI Model on Your PC Because .... Retrieved from https://www.youtube.com/watch?v=RoACSQsfeiE